From a recent conversation with a customer, I noticed even some of the experienced DBAs are not very clear about the terms “unallocated space” and “unused space” and by doing a quick Bing search, found there are many SQL friends out there trying to get a clear correlation between these SQL Server terms: unallocated space, unused space, and reserved.

Today, let us try to get a clear picture (infact, a picture!) of these terms using sp_spaceused system stored procedure and at the same time understanding results for database size information.

Execute the below query on AdventureWorks2012 sample database

USE [AdventureWorks2012]

GO

EXEC sp_spaceused

GO

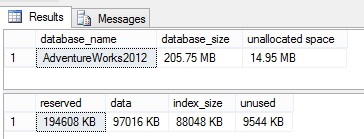

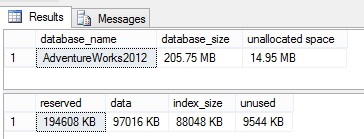

Results:

Now, let us understand the above result sets, lets check by the column names

- database_size: database size (data files + log files) = 205.75 MB

- unallocated space: space that is not reserved for use by database objects (Space Available) = 14.95 MB

- reserved: space that is reserved for use by database objects = 190.05 MB

- data: total space used by data = 97016 KB/1024 = 94.74 MB

- index_size: total space used by indexes = 88048 KB/1024 = 85.99 MB

- unused: portion of the reserved space, which is not yet used = 9544 KB/1024 = 9.32 MB

- used: let us coin this word to simplify things. say used = data + index_size = 94.74 + 85.99 = 180.73 MB

We now know what these columns are and the numbers they are returning. But, here is the real confusion part: How are they correlated with each other?

To answer this, below is the simple formulae I came up with to better interpret the results. I haven’t found this simple explanation clearly anywhere else on SQL space (trust me, you won’t)

(color coded to read easy)

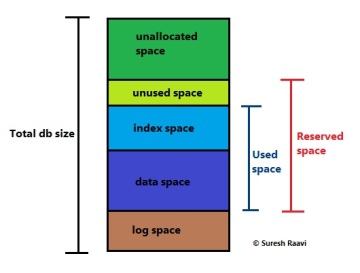

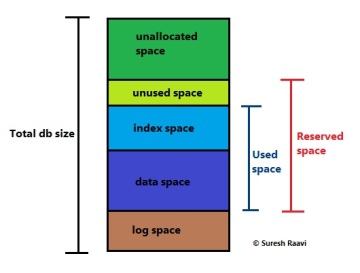

used = data + index_size

reserved = used + unused

database_size = reserved + unallocated space + log space

To cross verify, we can substitute the result set we got from AdventureWorks2012 database in the above formula and confirm by checking the log file size. Alternatevely, we can calculate the log file size, which is not returned from the ‘sp_spaceused’ result set.

180.73 = 94.74 + 85.99 –>TRUE

190.05 = 180.43 + 9.32 –> TRUE

205.75 = 190.05 + 14.95 + log space

Hence log space = 205.75 – (190.05+14.95) = 0.75 MB (which is right!)

And, below is my small art in the same color coding as above to illustrate in a picture format. Of course, who doesn’t love pictures, and I am no exception -:)

Read Full Post »