Before we get started on this topic, here is a quick fact..in SQL Server 2000, there used to be hard limit on the data that can be stored in a single row, which is 8,060 bytes. So, if the data exceeds this limit, the update or insert operation would fail!

Fortunately, in later SQL Server versions, rows are dynamically managed to exceed this limit and the combined width of the row can now exceed the 8,060 byte limit. I wanted to refresh this in our memory as this will help us to better understand the allocation units concept.

What are Allocation Units in SQL Server:

Every partition in a SQL Server table can contain 3 types of data, each stored on its own set of pages. And each of these types of pages is called an Allocation Unit. Below are the 3 types of Allocation Units.

- IN_ROW_DATA

- ROW_OVERFLOW_DATA

- LOB_DATA

So, an Allocation Unit is basically just a set of particular type of pages. Now, let us try to understand each of these allocation units using a demo.

When the row size stays within the 8,060-byte limit, SQL Server stores all of the data in the IN_ROW_DATA allocation unit and usually this unit holds the majority of data in most of the applications.

To better explain the concept, I came up with this simple Demo:

--Create a sample db AllocationUnitsDemo

USE master

GO

CREATE DATABASE AllocationUnitsDemo

GO

--Cretae a sample table ProductDetails in the AllocationUnitsDemo db

--Total length of the row in this table is 1000 + 4000 = 5000 (< 8000)

Use AllocationUnitsDemo

GO

CREATE TABLE ProductDetails

(

ProductName varchar(1000),

ProductDesc varchar (4000),

)

GO

--Check the allocation unit type

Use AllocationUnitsDemo

GO

SELECT type_desc, total_pages, used_pages,data_pages

FROM sys.allocation_units

WHERE container_id = (SELECT partition_id FROM sys.partitions

WHERE OBJECT_ID = OBJECT_ID('ProductDetails'))

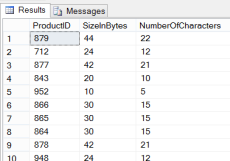

Results:

Remember the introduction? so, when the row exceeds the 8,060-byte limit, SQL Server then moves one or more of the variable-length columns to pages in the ROW_OVERFLOW_DATA allocation unit.

We still have a limitation here for the row size. Though the combined width of the row can exceed the 8,060 byte limit, the individual width of the columns must be within the limit of 8,000 bytes. This means we can have a table with two columns defined as nvarchar(5000), nvarchar(5000), but we are not allowed nvarchar(10000)

Demo Continued..

--Add an extra column to the above table ProductDetails

--Make the total length of the row to become 5000 + 4000 = 9000 (>8000)

Use AllocationUnitsDemo

GO

ALTER TABLE ProductDetails ADD ProductSummary nvarchar(4000)

--Now, Check the allocation unit type

Use AllocationUnitsDemo

GO

SELECT type_desc, total_pages, used_pages,data_pages

FROM sys.allocation_units

WHERE container_id = (SELECT partition_id FROM sys.partitions

WHERE OBJECT_ID = OBJECT_ID('ProductDetails'))

Results:

If a column with LOB data type is defined, then SQL Server uses the LOB_DATA allocation unit. To know what data types are considered LOB and to get the list of LOB columns from a database, please refer my previous post: “SQL Server – Find all the LOB Data Type Columns in a Database Using T-SQL Script”

Demo Continued..

--Add LOB data type column to the table ProductDetails

Use AllocationUnitsDemo

GO

ALTER TABLE ProductDetails ADD ProductImage Image

--Again, Check the allocation unit type

Use AllocationUnitsDemo

GO

SELECT type_desc, total_pages, used_pages,data_pages

FROM sys.allocation_units

WHERE container_id = (SELECT partition_id FROM sys.partitions

WHERE OBJECT_ID = OBJECT_ID('ProductDetails'))

Results:

--Cleanup

Use master

GO

DROP DATABASE AllocationUnitsDemo

How many Allocation Units can a Table have?

It actually depends on the number of partitions and indexes on the table.

To simplify the concept, as shown in the below picture, assume there is one table having no indexes (HEAP) and no partitions. Having no partitions mean, all of the table’s contents are stored in a single partition, meaning every table has at-least 1 partition.

Based on the above, we can have upto 3 allocation units for a table with no partitions and no indexes. And how about if we have partitions and Indexes? Below is the formula I came up with to get the maximum possible number of allocation units per table.

- No of Allocation Units = No of Partitions × No of Indexes × 3

So, as we see from the figures above, a table can have up to 45 million allocation units in SQL Server 2012!

Read Full Post »