An auto-growth event is the process by which the SQL Server engine expands the size of a database file when it runs out of space. The amount by which a database file should grow is determined by the value that is set in the SQL configuration. While every organization has its own standards for this setting, however there are some widely followed best practices like for instance the auto-growth setting should not be in percent value

In this post I have put down two scripts that you can customize per your organizational standards. The first script helps you to identify the current settings and the second one can be used to update auto-growth settings. Both the scripts are targeted for all the databases by default, however you can call out the databases that you want to exclude from this change. Please make sure that you test these changes before pushing out to production servers.

Script 1: Identify the current database settings

select DB_NAME(mf.database_id) database_name , mf.name logical_name , CONVERT (DECIMAL (20,2) , (CONVERT(DECIMAL, size)/128)) [file_size_MB] , CASE mf.is_percent_growth WHEN 1 THEN 'Yes' ELSE 'No' END AS [is_percent_growth] , CASE mf.is_percent_growth WHEN 1 THEN CONVERT(VARCHAR, mf.growth) + '%' WHEN 0 THEN CONVERT(VARCHAR, mf.growth/128) + ' MB' END AS [growth_in_increment_of] , CASE mf.is_percent_growth WHEN 1 THEN CONVERT(DECIMAL(20,2), (((CONVERT(DECIMAL, size)*growth)/100)*8)/1024) WHEN 0 THEN CONVERT(DECIMAL(20,2), (CONVERT(DECIMAL, growth)/128)) END AS [next_auto_growth_size_MB] , CASE mf.max_size WHEN 0 THEN 'No growth is allowed' WHEN -1 THEN 'File will grow until the disk is full' ELSE CONVERT(VARCHAR, mf.max_size) END AS [max_size] , physical_name from sys.master_files mf

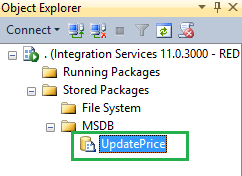

Script 2: Update database auto-growth values

/* ****MODIFICATION REQUIRED for AUTOGROWTH -- See line 64 below**** 1) Use this script to change the auto growth setting of for all databases 2) If you want to exclude any database add the DBs in the WHERE Clause -- See line 50 below 3) Tested in 2012 and 2014 SQL Servers */ IF EXISTS(SELECT name FROM sys.sysobjects WHERE name = N'ConfigAutoGrowth' AND xtype='U') DROP TABLE ConfigAutoGrowth GO CREATE TABLE DBO.ConfigAutoGrowth ( iDBID INT, sDBName SYSNAME, vFileName VARCHAR(max), vGrowthOption VARCHAR(12) ) PRINT 'Table ConfigAutoGrowth Created' GO -- Inserting data into staging table INSERT INTO DBO.ConfigAutoGrowth SELECT SD.database_id, SD.name, SF.name, CASE SF.status WHEN 1048576 THEN 'Percentage' WHEN 0 THEN 'MB' END AS 'GROWTH Option' FROM SYS.SYSALTFILES SF JOIN SYS.DATABASES SD ON SD.database_id = SF.dbid GO -- Dynamically alters the file to set auto growth option to fixed mb DECLARE @name VARCHAR ( max ) -- Database Name DECLARE @dbid INT -- DBID DECLARE @vFileName VARCHAR ( max ) -- Logical file name DECLARE @vGrowthOption VARCHAR ( max ) -- Growth option DECLARE @Query VARCHAR(max) -- Variable to store dynamic sql DECLARE db_cursor CURSOR FOR SELECT idbid,sdbname,vfilename,vgrowthoption FROM configautogrowth --WHERE sdbname NOT IN ( 'master' ,'msdb' ) --<<--ADD DBs TO EXCLUDE --AND vGrowthOption = 'Percentage' or 'Mb' OPEN db_cursor FETCH NEXT FROM db_cursor INTO @dbid,@name,@vfilename,@vgrowthoption WHILE @@FETCH_STATUS = 0 BEGIN PRINT 'Changing AutoGrowth option for database:- '+ UPPER(@name) /******If you want to change the auto growth size to a different value then just modify the filegrowth value in script below *********/ SET @Query = 'ALTER DATABASE '+ '[' + @name + ']' +' MODIFY FILE (NAME = '+ '[' +@vFileName + ']' +',FILEGROWTH = 5MB)' --<<--ADD AUTOGROWTH SIZE HERE EXECUTE(@Query) FETCH NEXT FROM db_cursor INTO @dbid,@name,@vfilename,@vgrowthoption END CLOSE db_cursor -- Closing the curson DEALLOCATE db_cursor -- deallocating the cursor GO -- Querying system views to see if the changes are applied DECLARE @SQL VARCHAR(8000), @sname VARCHAR(3) SET @SQL=' USE [?] SELECT ''?'' [Dbname] ,[name] [Filename] ,CASE is_percent_growth WHEN 1 THEN CONVERT(VARCHAR(5),growth)+''%'' ELSE CONVERT(VARCHAR(20),(growth/128))+'' MB'' END [Autogrow_Value] ,CASE max_size WHEN -1 THEN CASE growth WHEN 0 THEN CONVERT(VARCHAR(30),''Restricted'') ELSE CONVERT(VARCHAR(30),''Unlimited'') END ELSE CONVERT(VARCHAR(25),max_size/128) END [Max_Size] FROM [?].sys.database_files' IF EXISTS(SELECT 1 FROM tempdb..sysobjects WHERE name='##Fdetails') DROP TABLE ##Fdetails CREATE TABLE ##Fdetails (Dbname VARCHAR(50),Filename VARCHAR(50), Autogrow_Value VARCHAR(15),Max_Size VARCHAR(30)) INSERT INTO ##Fdetails EXEC sp_msforeachdb @SQL SELECT * FROM ##Fdetails ORDER BY Dbname --Dropping the staging table DROP TABLE ConfigAutoGrowth GO